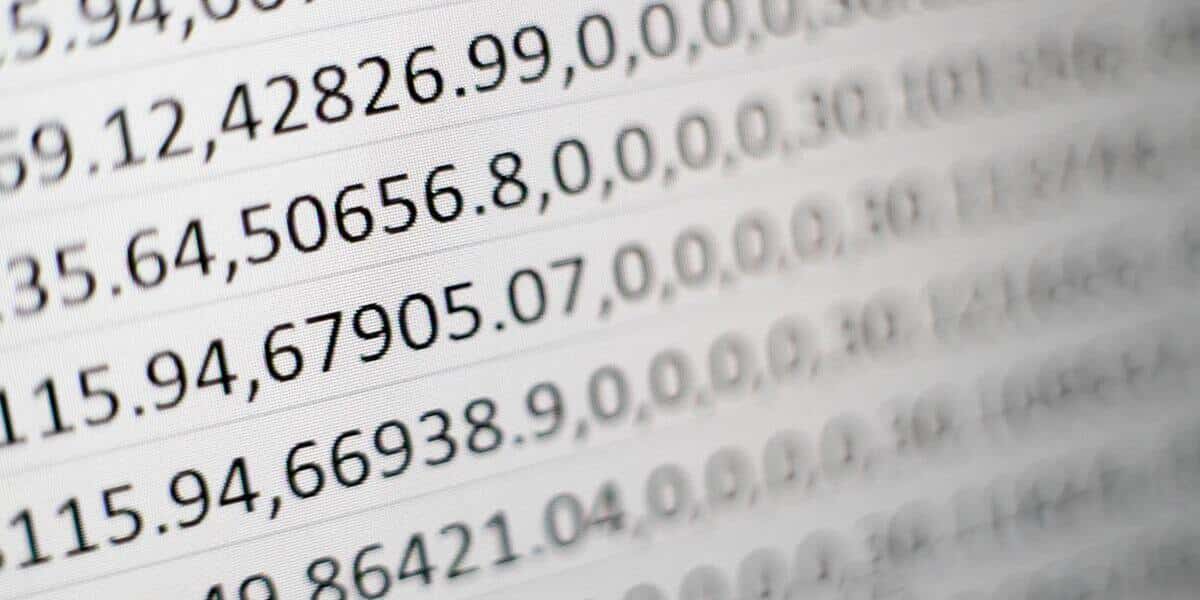

Unbalanced classification (also called imbalanced classification) refers to classification tasks in which the examples are unevenly distributed across the classes. Typically, these are binary classification tasks in which the majority of examples in the training dataset belong to the normal class and a minority belong to the abnormal class.

Classification predictive modeling

Classification predictive modeling algorithms are evaluated based on their results. Classification accuracy is a popular metric for evaluating a model based on its predicted class labels. Classification accuracy is not perfect, but it is a good starting point for many classification tasks.
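As a minimal sketch of the metric described above, classification accuracy is the fraction of predicted labels that match the true labels. The function name `accuracy` and the sample labels are illustrative assumptions, not taken from a specific library.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


# Hypothetical labels: 6 of the 8 predictions match.
y_true = [0, 0, 1, 1, 0, 1, 0, 0]
y_pred = [0, 0, 1, 0, 0, 1, 1, 0]

acc = accuracy(y_true, y_pred)
print(acc)  # 0.75
```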

Instead of class labels, some tasks may require predicting the probability of class membership for each example. This exposes the uncertainty in each prediction, which the application or user can then interpret.
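One way an application might interpret predicted probabilities is to apply its own decision threshold. The sketch below assumes a binary task where each value is the predicted probability of class 1; the helper `to_labels` and the threshold values are hypothetical.

```python
def to_labels(probabilities, threshold=0.5):
    """Turn predicted probabilities of class 1 into hard 0/1 labels."""
    return [1 if p >= threshold else 0 for p in probabilities]


# Hypothetical model outputs for four examples.
probs = [0.05, 0.40, 0.55, 0.90]

default_labels = to_labels(probs)                  # threshold 0.5
cautious_labels = to_labels(probs, threshold=0.3)  # flags more examples as class 1

print(default_labels)   # [0, 0, 1, 1]
print(cautious_labels)  # [0, 1, 1, 1]
```

Lowering the threshold trades more false alarms for fewer missed positives, which is a choice the application has to make.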

There are four main types of classification tasks you may encounter; they are:

- Binary classification

- Multi-class classification

- Multi-label classification

- Unbalanced classification

Problems with unbalanced classification

The number of examples belonging to each class may be referred to as the class distribution. Unbalanced classification is a classification predictive modeling problem in which the number of examples in the training dataset is not balanced across the class labels. That is, the class distribution is not equal or close to equal, but is instead biased or skewed.
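A quick way to see how skewed a dataset is: count the examples per class and compare the shares. This is a small sketch using only the standard library; the 95/5 split is an invented example.

```python
from collections import Counter


def class_distribution(labels):
    """Return the share of examples that belongs to each class."""
    counts = Counter(labels)
    total = len(labels)
    return {label: count / total for label, count in counts.items()}


# Hypothetical training labels: 95 normal examples (0), 5 abnormal examples (1).
labels = [0] * 95 + [1] * 5

distribution = class_distribution(labels)
counts = Counter(labels)
imbalance_ratio = max(counts.values()) / min(counts.values())

print(distribution)     # {0: 0.95, 1: 0.05}
print(imbalance_ratio)  # 19.0
```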

The challenges of unbalanced classification

There are three main problems arising from data with unequal class distributions. They are as follows:

The machine problem. Machine learning algorithms are built to minimize errors. Since the probability of cases belonging to the majority class is significantly high in an unbalanced dataset, the algorithms are more likely to classify new observations into the majority class.
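To make this concrete, the sketch below builds a trivial "model" that always predicts the majority class. The 95/5 dataset is invented for illustration. The baseline reaches high accuracy while finding none of the minority cases.

```python
from collections import Counter

# Hypothetical labels: 95 majority examples (0) and 5 minority examples (1).
y_true = [0] * 95 + [1] * 5

# A degenerate "model" that always predicts the most frequent class.
majority_label = Counter(y_true).most_common(1)[0][0]
y_pred = [majority_label] * len(y_true)

baseline_accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

# Share of true minority examples that were actually predicted as minority.
minority_total = sum(1 for t in y_true if t == 1)
minority_found = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
minority_recall = minority_found / minority_total

print(baseline_accuracy)  # 0.95
print(minority_recall)    # 0.0
```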

The intrinsic problem. In real life, the cost of a false negative is usually much higher than the cost of a false positive, but ML algorithms penalize both with similar weighting.
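The effect of unequal error costs can be sketched by pricing each error type separately. The cost values below (a false negative ten times as expensive as a false positive) are purely illustrative assumptions, as are the two sets of predictions.

```python
def total_cost(y_true, y_pred, fp_cost=1.0, fn_cost=1.0):
    """Sum the cost of all false positives and false negatives."""
    false_positives = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    false_negatives = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return false_positives * fp_cost + false_negatives * fn_cost


y_true = [0, 0, 0, 0, 1, 1]
model_a = [0, 0, 0, 0, 0, 0]  # misses both positives: 2 false negatives
model_b = [1, 1, 0, 0, 1, 1]  # raises 2 false alarms: 2 false positives

# With equal costs the two models look equally good.
equal_a = total_cost(y_true, model_a)
equal_b = total_cost(y_true, model_b)

# With an assumed 10x cost for false negatives, model_a is far worse.
skewed_a = total_cost(y_true, model_a, fp_cost=1.0, fn_cost=10.0)
skewed_b = total_cost(y_true, model_b, fp_cost=1.0, fn_cost=10.0)

print(equal_a, equal_b)    # 2.0 2.0
print(skewed_a, skewed_b)  # 20.0 2.0
```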

The human problem. Common practices in many industries are often set by expert judgment rather than by empirical research, which is rarely the optimal way to handle the problem.

Solutions

There are two different approaches to dealing with unbalanced data: an algorithm-level approach and a data-level approach.

Algorithm-level approach. By default, ML algorithms penalize false positives and false negatives equally. One way to counter this is to modify the algorithm itself so that it predicts the minority class better. This can be done through recognition-based learning or cost-sensitive learning.
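One simple form of cost-based (cost-sensitive) learning is to weight each class inversely to its frequency, so that errors on the minority class count for more. The sketch below uses the common "balanced" heuristic, `n_samples / (n_classes * class_count)`, which is also the formula scikit-learn documents for `class_weight="balanced"`. The function names and the 90/10 dataset are illustrative assumptions.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {label: n_samples / (n_classes * count) for label, count in counts.items()}


def weighted_error(y_true, y_pred, weights):
    """Weight of the misclassified examples divided by the total weight."""
    total = sum(weights[t] for t in y_true)
    wrong = sum(weights[t] for t, p in zip(y_true, y_pred) if t != p)
    return wrong / total


# Hypothetical labels: 90 majority examples (0) and 10 minority examples (1).
y_true = [0] * 90 + [1] * 10
weights = balanced_class_weights(y_true)

# A model that always predicts the majority class.
always_majority = [0] * len(y_true)

plain_error = sum(t != p for t, p in zip(y_true, always_majority)) / len(y_true)
cost_sensitive_error = weighted_error(y_true, always_majority, weights)

print(weights[1])            # 5.0
print(plain_error)           # 0.1
print(round(cost_sensitive_error, 3))  # 0.5
```

Under the plain error the majority-only model looks acceptable; under the weighted error it is no better than a coin flip, which is the signal a cost-sensitive learner would train against.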

Data-level approach. This involves resampling the data to mitigate the effects of class imbalance. The data-level approach has gained widespread acceptance among practitioners because it is more flexible and allows the use of the latest algorithms. The two most common techniques are oversampling and undersampling.

- Oversampling increases the number of minority class members in the training dataset. Its advantage is that no information from the original training set is lost, since all observations from both the minority and majority classes are kept. On the other hand, it is prone to overfitting.

- Undersampling, in contrast to oversampling, reduces the number of majority class samples to balance the class distribution. Because it removes observations from the original dataset, it may discard useful information.
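The two resampling techniques above are commonly called random oversampling and random undersampling in their simplest form. The sketch below implements both with the standard library only. The function names, the fixed seed, and the toy dataset are assumptions for illustration; dedicated libraries offer more refined variants.

```python
import random
from collections import Counter


def group_by_class(X, y):
    """Map each class label to the list of feature rows that carry it."""
    groups = {}
    for features, label in zip(X, y):
        groups.setdefault(label, []).append(features)
    return groups


def random_oversample(X, y, seed=0):
    """Duplicate randomly chosen rows until every class matches the largest one."""
    rng = random.Random(seed)
    groups = group_by_class(X, y)
    target = max(len(rows) for rows in groups.values())
    X_out, y_out = [], []
    for label, rows in groups.items():
        extra = [rng.choice(rows) for _ in range(target - len(rows))]
        X_out.extend(rows + extra)
        y_out.extend([label] * target)
    return X_out, y_out


def random_undersample(X, y, seed=0):
    """Keep a random subset of each class, sized to match the smallest one."""
    rng = random.Random(seed)
    groups = group_by_class(X, y)
    target = min(len(rows) for rows in groups.values())
    X_out, y_out = [], []
    for label, rows in groups.items():
        X_out.extend(rng.sample(rows, target))
        y_out.extend([label] * target)
    return X_out, y_out


# Toy dataset: 8 majority rows (label 0) and 2 minority rows (label 1).
X = [[i] for i in range(10)]
y = [0] * 8 + [1] * 2

X_over, y_over = random_oversample(X, y)
X_under, y_under = random_undersample(X, y)

print(Counter(y_over))   # 8 examples of each class
print(Counter(y_under))  # 2 examples of each class
```

Resampling is normally applied to the training split only, so that the evaluation data keeps its original class distribution.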

Examples of unbalanced classification

Many classification problems can have severe class imbalances, but a look at common problem domains that are inherently unbalanced will make the ideas and challenges of class imbalance concrete.

- Fraud detection

- Claim prediction

- Default prediction

- Churn prediction

- Spam detection

- Anomaly detection

- Outlier detection

- Intrusion detection

- Conversion prediction

Each of these problem domains represents an entire field of research, in which specific problems can be framed and investigated as unbalanced classification predictive modeling. This underlines the multidisciplinary nature of unbalanced classification and why it is so important for machine learning practitioners to be aware of the problem and to handle it skillfully.

When facing unbalanced datasets, there is no single solution for improving the accuracy of the predictive model. You may have to try several methods to find the most appropriate sampling techniques for your dataset, since the most effective techniques depend on the characteristics of the unbalanced dataset. Appropriate evaluation metrics should be used when comparing models.
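As an illustration of metrics that are more informative than accuracy on skewed data, the sketch below computes precision, recall, and F1 for the minority class. These three metrics are one common choice, not the only one, and the function name and sample predictions are assumptions made for the example.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Precision, recall, and F1 score for the class treated as positive."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p == positive)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t != positive and p == positive)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == positive and p != positive)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Hypothetical results: 90 negatives (2 flagged by mistake), 10 positives (6 found).
y_true = [0] * 90 + [1] * 10
y_pred = [0] * 88 + [1] * 2 + [1] * 6 + [0] * 4

model_accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
precision, recall, f1 = precision_recall_f1(y_true, y_pred)

print(model_accuracy)  # 0.94
print(precision)       # 0.75
print(recall)          # 0.6
print(round(f1, 3))    # 0.667
```

The accuracy of 0.94 hides the fact that 4 of the 10 minority cases were missed, which the recall of 0.6 makes visible.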