Apache Spark is an open-source platform for processing large amounts of data, built around speed, ease of use and advanced analytics. It was originally developed at UC Berkeley in 2009 and is the largest open-source data processing project.

Spark’s story

Since its launch, Apache Spark has been quickly adopted by enterprises across many industries. Online giants such as Netflix, Yahoo and eBay have deployed Spark on a massive scale, collectively processing many petabytes of data. Spark has quickly become the largest open-source community in big data, with over 1,000 contributors from over 250 organizations.

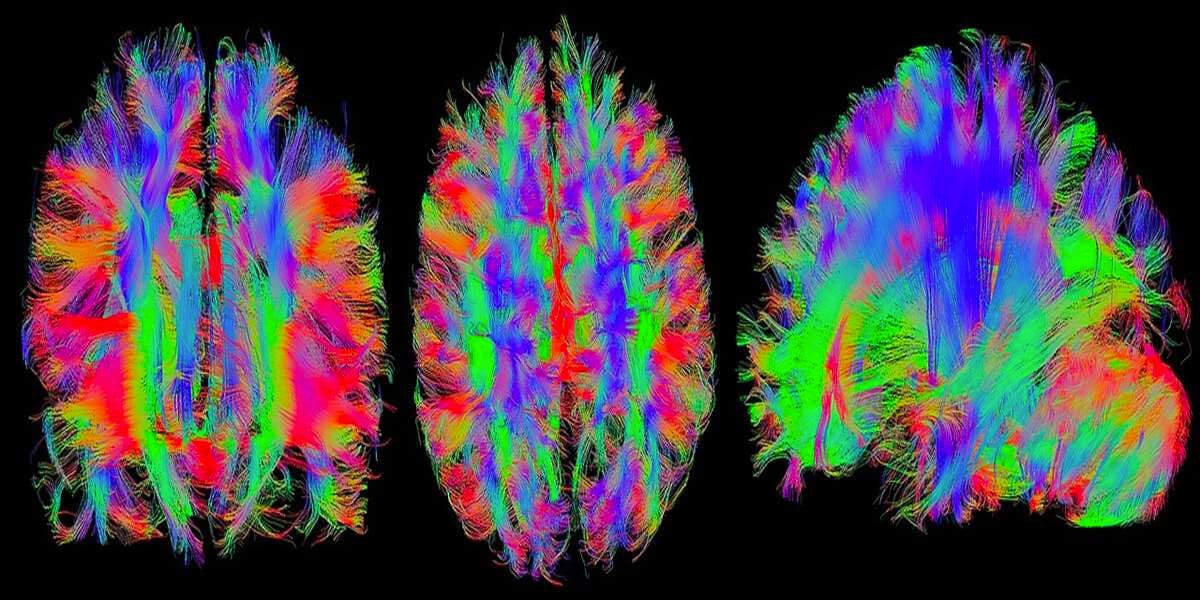

Spark is designed for data science. Data scientists commonly use machine learning, a set of techniques and algorithms that can learn from data. These algorithms are often iterative, and Spark's ability to cache a data set in memory significantly speeds up the repeated passes over the data that they require. This makes Spark a well-suited processing engine for implementing such algorithms. Spark also includes the MLlib library, which provides a growing set of machine learning algorithms for common data science techniques: classification, regression, collaborative filtering, clustering and dimensionality reduction.
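To see why caching matters for iterative algorithms, here is a minimal sketch in plain Python. It is not Spark's API: `CountingSource` and `fit_mean` are hypothetical names made up for this illustration, and the "expensive read" is only simulated with a counter. The point is simply that an iterative fit re-reads the source on every pass unless the data is held in memory.

```python
# Toy illustration only: plain Python, not Spark's API.

class CountingSource:
    """Hypothetical data source that counts how often it is read."""

    def __init__(self, rows):
        self._rows = list(rows)
        self.reads = 0

    def load(self):
        self.reads += 1  # stands in for an expensive disk or network read
        return list(self._rows)


def fit_mean(source, iterations, cache):
    """Estimate the mean of the data by gradient descent on squared error."""
    cached = source.load() if cache else None
    estimate = 0.0
    for _ in range(iterations):
        # Without caching, every iteration goes back to the source.
        data = cached if cache else source.load()
        gradient = sum(estimate - x for x in data) / len(data)
        estimate -= 0.5 * gradient
    return estimate


uncached = CountingSource([1.0, 2.0, 3.0, 4.0])
cached = CountingSource([1.0, 2.0, 3.0, 4.0])

result_uncached = fit_mean(uncached, iterations=20, cache=False)
result_cached = fit_mean(cached, iterations=20, cache=True)

print(uncached.reads, cached.reads)
```

Both runs arrive at the same estimate, but the uncached run reads the source once per iteration while the cached run reads it once in total. In a real cluster the saved reads are disk or network I/O, which is where the speed-up for iterative workloads comes from.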

Apache Spark advantages

Speed

Designed from the ground up for performance, Spark can be up to 100 times faster than Hadoop for large-scale data processing by exploiting in-memory computing and other optimizations. Spark is also fast when data is stored on disk, and it currently holds a world record for large-scale on-disk sorting.

Ease of use

Spark has easy-to-use APIs for working with large data sets. These include a collection of over 100 operators for transforming data, as well as familiar DataFrame APIs for manipulating semi-structured data.

Unified engine

Spark ships with higher-level libraries, including support for SQL queries, streaming data, machine learning and graph processing. These standard libraries increase developer productivity and can be seamlessly combined to create complex workflows.

Spark features

Spark takes MapReduce to the next level. With capabilities such as in-memory data storage and near-real-time processing, its performance can be several times higher than that of other big data technologies. Spark also supports optimization across the steps of a data processing workflow, and it provides a higher-level API to improve programmer productivity along with a consistent architectural model for big data solutions.

Spark stores intermediate results in memory instead of writing them to disk, which is especially useful when you need to work on the same data set repeatedly. It is designed as an execution engine that works both in memory and on disk: Spark operators perform external (on-disk) operations when data does not fit in memory, so Spark can process data sets larger than the aggregate memory of the cluster.

Spark tries to keep as much data in memory as will fit and then spills the rest to disk. It can hold part of a data set in memory and the remainder on disk. This in-memory storage is the source of Spark's performance advantage.
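The memory-then-disk behavior can be pictured with a small sketch in plain Python. This is a toy model under simplifying assumptions, not how Spark is implemented: `SpillingStore` and its `memory_slots` budget are hypothetical names for this example, and memory is counted in whole partitions rather than bytes.

```python
import os
import pickle
import tempfile


class SpillingStore:
    """Toy partition store: keeps up to `memory_slots` partitions in memory
    and writes any further partitions to a temporary directory on disk."""

    def __init__(self, memory_slots):
        self.memory_slots = memory_slots
        self.in_memory = {}
        self.on_disk = {}
        # The directory is removed when the store is garbage-collected.
        self._tmp = tempfile.TemporaryDirectory(prefix="spill_sketch_")

    def put(self, key, partition):
        if len(self.in_memory) < self.memory_slots:
            self.in_memory[key] = partition
        else:
            # Memory budget exhausted: spill this partition to disk.
            path = os.path.join(self._tmp.name, f"{key}.pkl")
            with open(path, "wb") as f:
                pickle.dump(partition, f)
            self.on_disk[key] = path

    def get(self, key):
        if key in self.in_memory:
            return self.in_memory[key]  # fast path
        with open(self.on_disk[key], "rb") as f:
            return pickle.load(f)  # slower path: read back from disk


store = SpillingStore(memory_slots=2)
for i in range(4):
    store.put(f"part-{i}", list(range(i * 3, i * 3 + 3)))

# The whole data set is still usable even though only half of it is in memory.
total = sum(sum(store.get(f"part-{i}")) for i in range(4))
print(sorted(store.in_memory), sorted(store.on_disk), total)
```

The caller never needs to know where a partition lives; only the access cost differs. That is the property that lets a job run on a data set larger than the available memory, at reduced speed for the spilled part.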

Other Spark features:

- Supports more than just map and reduce functions.

- Optimizes arbitrary operator graphs.

- Provides lazy evaluation of big data queries, which helps optimize the overall data processing workflow.

- Provides concise and consistent APIs in Scala, Java and Python.

- Offers an interactive shell for Scala and Python. This is not yet available in Java.
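The points above about query evaluation and operator graphs rest on deferring work until a result is actually requested, usually called lazy evaluation. The following is a minimal sketch of that idea in plain Python. It is not Spark's API or its optimizer: `LazyDataset` is a hypothetical class for this illustration, and the only "optimization" shown is running the whole recorded chain in a single pass over the data.

```python
class LazyDataset:
    """Toy dataset that records transformations and runs them only on an action."""

    def __init__(self, source, ops=()):
        self._source = source
        self._ops = tuple(ops)

    def map(self, fn):
        # Transformation: record the step, do no work yet.
        return LazyDataset(self._source, self._ops + (("map", fn),))

    def filter(self, predicate):
        # Transformation: record the step, do no work yet.
        return LazyDataset(self._source, self._ops + (("filter", predicate),))

    def plan(self):
        """Return the recorded chain of operator kinds."""
        return [kind for kind, _ in self._ops]

    def collect(self):
        """Action: stream each record through the whole chain in one pass."""
        results = []
        for record in self._source:
            keep = True
            for kind, fn in self._ops:
                if kind == "map":
                    record = fn(record)
                elif not fn(record):
                    keep = False
                    break
            if keep:
                results.append(record)
        return results


calls = []


def square(x):
    calls.append(x)  # lets us observe when the function really runs
    return x * x


pipeline = LazyDataset(range(1, 6)).map(square).filter(lambda x: x % 2 == 1)
calls_before_action = len(calls)  # still 0: nothing has executed
odd_squares = pipeline.collect()
print(pipeline.plan(), calls_before_action, odd_squares)
```

Because the engine sees the full chain before running anything, it can decide how to execute it, for example by avoiding intermediate collections between steps as this sketch does. A real engine can apply far more sophisticated rewrites to the recorded plan.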

Spark is written in the Scala programming language and runs on the Java Virtual Machine (JVM). It currently supports the following languages for application development: Scala, Java, Python, Clojure and R.

The wide range of Spark libraries, together with the ability to process data from many different types of data stores, means that Spark can be applied to many different problems in many industries. Digital advertising companies use it to maintain databases of online campaign activity and to design campaigns tailored to specific customers. Financial companies use it to ingest financial data and run models that guide investment activity. Consumer goods companies use it to aggregate customer data and forecast trends, in order to guide inventory decisions and spot new market opportunities.